Editorial responsibilities: Direction of the "Droit et Économie" series, L.G.D.J. - Lextenso éditions (30)

🌐follow Marie-Anne Frison-Roche on LinkedIn

🌐subscribe to the Newsletter MAFR. Regulation, Compliance, Law

____

► Full Reference: M.-A. Frison-Roche (ed.), Contentieux Systémique Émergent (Emerging Systemic Litigation), Paris, LGDJ, "Droit & Économie" Series, to be published

____

📚Consult all the other books of the Series in which this book is published

____

► General Presentation of the Book :

____

TABLE OF CONTENTS

Thesaurus : Doctrine

► Full Reference: A. Nicollet, "Le Droit de la Compliance, clé de voute de la Régulation de l’intelligence artificielle" (Compliance Law, Keystone of the Regulation of Artificial Intelligence), in P. Bonis and L. Castex (eds.), Compliance et nouvelles régulations, Annales des Mines, "Enjeux numériques" series, June 2025.

____

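► See the presentation of other articles published in the same issue:

- Pierre Bonis & Marie-Anne Frison-Roche, 📝 Réguler le numérique ou Sisyphe heureux (Regulating the Digital World, or Sisyphus Happy)

- M.-A. Frison-Roche, 📝 Le Droit de la compliance, voie royale pour réguler l'espace numérique (Compliance Law, the Royal Road to Regulating the Digital Space)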

________

Thesaurus : Doctrine

► Full Reference: R. Sève, "Compliance Obligation and changes in Sovereignty and Citizenship", in M.-A. Frison-Roche (ed.), Compliance Obligation, Journal of Regulation & Compliance (JoRC) and Bruylant, "Compliance & Regulation" Series, to be published

____

📘read a general presentation of the book, Compliance Obligation, in which this article is published

____

► Summary of the article (done by the Journal of Regulation & Compliance - JoRC):

The contribution describes "les changements de philosophie du droit que la notion de compliance peut impliquer par rapport à la représentation moderne de l’Etat assurant l’effectivité des lois issues de la volonté générale, dans le respect des libertés fondamentales qui constituent l’essence du sujet de droit." ("the changes in legal philosophy that the notion of Compliance may imply in relation to the modern representation of the State ensuring the effectiveness of laws resulting from the general will, while respecting the fundamental freedoms that constitute the essence of the subject of law").

The contributor considers that the definition of Compliance is owed to authors able to « jouer un rôle d’éclairage et de structuration d’un vaste ensemble d’idées et de phénomènes précédemment envisagés de manière disjointe. Pour ce qui nous occupe, c’est sûrement le cas de la théorie de la compliance, développée en France par Marie-Anne Frison-Roche dans la lignée de grands économistes (Jean-Jacques Laffont, Jean Tirole) et dont la première forme résidait dans les travaux bien connus de la Professeure sur le droit de la régulation. » ("play a role in illuminating and structuring a vast set of ideas and phenomena previously considered in a disjointed manner. For our purposes, this is certainly the case with the theory of Compliance, developed in France by Marie-Anne Frison-Roche in the tradition of great economists (Jean-Jacques Laffont, Jean Tirole) and whose first form was in her well-known work on Regulatory Law").

Drawing on the American Law Institute's Principles of the Law, which define compliance as a "set of rules, principles, controls, authorities, offices and practices designed to ensure that an organisation conforms to external and internal norms", he notes that Compliance thus appears to be a neutral mechanism aimed at efficiency through a shift towards the Ex Ante. But he stresses that its novelty lies in the fact that it is aimed 'only' at future events, 'refounding' and 'monumentalising' the matter through the notion of 'Monumental Goals' conceived by Marie-Anne Frison-Roche, which gives rise to a new jus commune. Thus, "la compliance c’est l’idée permanente du droit appliquée à de nouveaux contextes et défis." ("Compliance is the permanent idea of Law applied to new contexts and challenges").

It is therefore not a question of making budget savings, but of continuing to apply the philosophy of the Social Contract to complex issues, particularly environmental ones.

This renews the place of the Citizen, who appears not only as an individual, as in the classical Greek conception and in Rousseau's, but also through entities such as NGOs, while large companies, because they alone have the means to pursue the Compliance Monumental Goals, would become "super-citizens", something the digital space is beginning to experience, at the risk of individuals themselves disappearing under "surveillance capitalism". But just as thinking about the Social Contract is linked to thinking about capitalism, Compliance is part of a logical historical extension, without any fundamental break: "C’est le développement et la complexité du capitalisme qui forcent à introduire dans les entités privées des mécanismes procéduraux d’essence bureaucratique, pour discipliner les salariés, contenir les critiques internes et externes, soutenir les managers en place" ("It is the development and complexity of capitalism that forces us to introduce procedural mechanisms of a bureaucratic nature into private entities, in order to discipline employees, contain internal and external criticism, and support the managers in place"); these mechanisms also force managers to justify their remuneration, benefits and so on.

Furthermore, in the words of the author, "Avec les buts monumentaux, - la prise en compte des effets lointains, diffus, agrégés par delà les frontières, de l’intérêt des générations futures, de tous les êtres vivants - , on passe, pour ainsi dire, à une dimension industrielle de l’éthique, que seuls de vastes systèmes de traitement de l’information permettent d’envisager effectivement." ("With the Monumental Goals - taking into account the distant, diffuse effects, aggregated across borders, the interests of future generations, of all living beings - we move, so to speak, to an industrial dimension of ethics, which only vast information processing systems can effectively envisage").

This is how a division of roles can emerge between artificial intelligence and human beings in organisations, particularly companies, or in decision-making processes.

Likewise, individual freedom does not disappear with Compliance, since one of its Monumental Goals is precisely to enable individuals to make choices in a complex environment, particularly in the digital space where the democratic system is now at stake, while technical mechanisms such as early warning will revive the right to civil disobedience, countering the charge of "surveillance capitalism".

The author concludes that the stakes are so high that Compliance, which has already overcome the distinctions between Private and Public Law and between national and international law, must also overcome the distinction between Information and secrecy, particularly in view of cyber-risks, which requires the State to develop and implement non-public Compliance strategies to safeguard the future.

____

🦉This article is available in full text to those registered for Professor Marie-Anne Frison-Roche's courses

________

May 14, 2026

Questions of Law

Feb. 3, 2026

Questions of Law

Nov. 19, 2025

Thesaurus : Doctrine

► Full Reference: B. Frydman, "Interprétation et numérisation" (Interpretation and Digitisation), in Cahiers du Conseil constitutionnel, Les méthodes d'interprétation, Nov. 2025.

____

📗Read all the contributions

________

Oct. 16, 2025

Thesaurus : Doctrine

► Full Reference: W. Marx, "La fabrique de l'intelligence : du mot à la chose" (The Making of Intelligence: From the Word to the Thing), in Formes de l'intelligence, Collège de France, 16 October 2025

____

► See the symposium Formes de l'intelligence (Forms of Intelligence), of which this lecture is part.

____

► Summary by the author: "Over the course of the 19th century, the concept of intelligence takes on an increasingly important place in European anthropological and philosophical thought, prevailing over competitors such as spirit (l'esprit) and understanding (l'entendement). It contributes to a biologisation and naturalisation of historical thought (Comte). Through the theory of evolution, the term establishes a dialectic between the individual and the collective (Spencer, Galton, Ribot). It takes on a political dimension (Maurras, Benda). At the same time, we witness efforts to depersonalise and formalise the problem of intelligence (Taine, Binet, Valéry), which pave the way for theories of artificial intelligence."

____

► Notes taken: Recalling that "to name is to construct the world", the speaker studies the word as used in the 19th century to designate understanding, namely "the act of understanding, then the faculty of understanding". By metonymy, an AI is a machine endowed with this faculty, just as angels used to be called "divine intelligences". The word then also came to mean agreement between two persons.

In competition with it, "reason" expresses thought (as opposed to the heart) and its manner of expression. Likewise, "understanding" (l'entendement) refers to comprehension.

If intelligence triumphed, it is for a grammatical reason: this noun calls for relations with objects and beings. Since the human being is conceived as a being in relations, the word triumphs as soon as, from the 18th century onwards, people ask how the human being can think of himself without God, and therefore in relation, through this transitive faculty that is intelligence. But as soon as it ceases to be exclusively human, once the notion of the animal-machine can be challenged, the problem of definition arises.

Intelligence then appears as a capacity to construct in order to correct errors of perception, a capacity that can be improved through a science of producing great men. The tendency is then to conceive "social intelligence" (French intelligence, etc.) on the model of individual intelligence: intelligence becomes an object of political battle.

The 19th and then the 20th centuries posit that the faculty expressed by intelligence is not necessarily the mark of the human being. The 20th century perhaps saw this put to the test.

________

Oct. 15, 2025

Thesaurus : Doctrine

► Full Reference: C.R. Sunstein, Imperfect Oracle: What AI Can and Cannot Do, University of Pennsylvania Press, 2025, 208 p.

____

► Summary of the book (by the publisher): "Imperfect Oracle is about the promise and limits of artificial intelligence. The promise is that in important ways AI is better than we are at making judgments. Its limits are evidenced by the fact that AI cannot always make accurate predictions—not today, not tomorrow, and not the day after, either.

Natural intelligence is a marvel, but human beings blunder because we are biased. We are biased in the sense that our judgments tend to go systematically wrong in predictable ways, like a scale that always shows people as heavier than they are, or like an archer who always misses the target to the right. Biases can lead us to buy products that do us no good or to make foolish investments. They can lead us to run unreasonable risks, and to refuse to run reasonable risks. They can shorten our lives. They can make us miserable.

Biases present one kind of problem; noise is another. People are noisy not in the sense that we are loud, though we might be, but in the sense that our judgments show unwanted variability. On Monday, we might make a very different judgment from the judgment we make on Friday. When we are sad, we might make a different judgment from the one we would make when we are happy. Bias and noise can produce exceedingly serious mistakes.

AI promises to avoid both bias and noise. For institutions that want to avoid mistakes it is now a great boon. AI will also help investors who want to make money and consumers who don’t want to buy products that they will end up hating. Still, the world is full of surprises, and AI cannot spoil those surprises because some of the most important forms of knowledge involve an appreciation of what we cannot know and why we cannot know it. Life would be a lot less fun if we could predict everything."

Oct. 2, 2025

Thesaurus : Doctrine

► Full Reference: R. Sève, "L'Obligation de Compliance et les mutations de la souveraineté et de la citoyenneté" ("Compliance Obligation and changes in Sovereignty and Citizenship"), in M.-A. Frison-Roche (ed.), L'obligation de Compliance, Journal of Regulation & Compliance (JoRC) and Dalloz, "Régulations & Compliance" series, 2025, pp. 97-107.

____

📕read the general presentation of the book, L'obligation de Compliance, in which this article is published.

____

► English Summary of this article (done by the Journal of Regulation & Compliance - JoRC) : The contribution describes "les changements de philosophie du droit que la notion de compliance peut impliquer par rapport à la représentation moderne de l’Etat assurant l’effectivité des lois issues de la volonté générale, dans le respect des libertés fondamentales qui constituent l’essence du sujet de droit." ("the changes in legal philosophy that the notion of Compliance may imply in relation to the modern representation of the State ensuring the effectiveness of laws resulting from the general will, while respecting the fundamental freedoms that constitute the essence of the subject of law").

The contributor considers that the definition of Compliance is owed to authors able to « jouer un rôle d’éclairage et de structuration d’un vaste ensemble d’idées et de phénomènes précédemment envisagés de manière disjointe. Pour ce qui nous occupe, c’est sûrement le cas de la théorie de la compliance, développée en France par Marie-Anne Frison-Roche dans la lignée de grands économistes (Jean-Jacques Laffont, Jean Tirole) et dont la première forme résidait dans les travaux bien connus de la Professeure sur le droit de la régulation. » ("play a role in illuminating and structuring a vast set of ideas and phenomena previously considered in a disjointed manner. For our purposes, this is certainly the case with the theory of Compliance, developed in France by Marie-Anne Frison-Roche in the tradition of great economists (Jean-Jacques Laffont, Jean Tirole) and whose first form was in her well-known work on Regulatory Law").

Drawing on the American Law Institute's Principles of the Law, which define compliance as a "set of rules, principles, controls, authorities, offices and practices designed to ensure that an organisation conforms to external and internal norms", he notes that Compliance thus appears to be a neutral mechanism aimed at efficiency through a shift towards the Ex Ante. But he stresses that its novelty lies in the fact that it is aimed 'only' at future events, 'refounding' and 'monumentalising' the matter through the notion of 'Monumental Goals' conceived by Marie-Anne Frison-Roche, which gives rise to a new jus commune. Thus, "la compliance c’est l’idée permanente du droit appliquée à de nouveaux contextes et défis." ("Compliance is the permanent idea of Law applied to new contexts and challenges").

It is therefore not a question of making budget savings, but of continuing to apply the philosophy of the Social Contract to complex issues, particularly environmental ones.

This renews the place of the Citizen, who appears not only as an individual, as in the classical Greek conception and in Rousseau's, but also through entities such as NGOs, while large companies, because they alone have the means to pursue the Compliance Monumental Goals, would become "super-citizens", something the digital space is beginning to experience, at the risk of individuals themselves disappearing under "surveillance capitalism". But just as thinking about the Social Contract is linked to thinking about capitalism, Compliance is part of a logical historical extension, without any fundamental break: "C’est le développement et la complexité du capitalisme qui forcent à introduire dans les entités privées des mécanismes procéduraux d’essence bureaucratique, pour discipliner les salariés, contenir les critiques internes et externes, soutenir les managers en place" ("It is the development and complexity of capitalism that forces us to introduce procedural mechanisms of a bureaucratic nature into private entities, in order to discipline employees, contain internal and external criticism, and support the managers in place"); these mechanisms also force managers to justify their remuneration, benefits and so on.

Furthermore, in the words of the author, "Avec les buts monumentaux, - la prise en compte des effets lointains, diffus, agrégés par delà les frontières, de l’intérêt des générations futures, de tous les êtres vivants - , on passe, pour ainsi dire, à une dimension industrielle de l’éthique, que seuls de vastes systèmes de traitement de l’information permettent d’envisager effectivement." ("With the Monumental Goals - taking into account the distant, diffuse effects, aggregated across borders, the interests of future generations, of all living beings - we move, so to speak, to an industrial dimension of ethics, which only vast information processing systems can effectively envisage").

This is how a division of roles can emerge between artificial intelligence and human beings in organisations, particularly companies, or in decision-making processes.

Likewise, individual freedom does not disappear with Compliance, since one of its Monumental Goals is precisely to enable individuals to make choices in a complex environment, particularly in the digital space where the democratic system is now at stake, while technical mechanisms such as early warning will revive the right to civil disobedience, countering the charge of "surveillance capitalism".

The author concludes that the stakes are so high that Compliance, which has already overcome the distinctions between Private and Public Law and between national and international law, must also overcome the distinction between Information and secrecy, particularly in view of cyber-risks, which requires the State to develop and implement non-public Compliance strategies to safeguard the future.

________

🦉This article is available in full text to those registered for Professor Marie-Anne Frison-Roche's courses

June 20, 2025

Thesaurus : Doctrine

► Full Reference: Haut Comité Juridique de la Place de Paris, Les impacts juridiques et réglementaires de l'Intelligence Artificielle en matière bancaire, financière et des assurances (The Legal and Regulatory Impacts of Artificial Intelligence in Banking, Finance and Insurance), June 2025.

_____

April 28, 2025

Thesaurus : Doctrine

► Full Reference: Cour de cassation, Préparer la Cour de cassation de demain. Cour de cassation et intelligence artificielle (Preparing the Cour de cassation of Tomorrow: The Cour de cassation and Artificial Intelligence), report, Apr. 2025, 159 p.

________

March 11, 2025

Conferences

🌐subscribe to the Video Newsletter MAFR Surplomb

____

► Full Reference: M.-A. Frison-Roche, "Le juriste, requis et bien placé pour le futur" (The Lawyer, Needed and Well Placed for the Future), in Groupe Lamy Liaisons, Les Éclaireurs du Droit, Hôtel de l’Industrie, Place Saint-Germain-des-Prés, Paris, 11 March 2025, 4 pm.

____

This speech opens a series of 4 workshops on the following themes:

- The challenge of Trust

- The challenge of Risk

- The challenge of Transmission

- The challenge of Leadership

____

🧮see the full programme of this event (in French)

____

⬜see the slides prepared for this speech, which were not shown during it (in French)

____

🎥 watch the short video made after the conference (in French)

____

► English Summary of this introductory conference: The 4 sessions will address the successive themes of trust, risk, transmission and leadership, which legal professionals are facing, particularly as a result of algorithms.

By way of introduction, three parts can be distinguished within the Future.

The future has a part of Stability: the lawyer can contribute to this stability, i.e. to the preservation of the past (I).

The future has a part of Predictability: the lawyer must increase this part in the present itself (II).

The future also has a part of Radical Novelty (III): at this point, which may be a precipice that no one had anticipated, the lawyer can also be present. Until now, lawyers have been associated with the first two hypotheses rather than with this one. Is that justified?

In each of these dimensions, the algorithmic system (AI) is presented as replacing or dominating the human.

In each of these 3 dimensions, Lawyers must be present, as they form a community that must remain united around the very idea of Law (algorithms do not conceive ideas; it is humans who transmit them to other humans, and the algorithmic system must remain a medium).

As far as the Stability of the future is concerned, the Lawyer can and must contribute to it, in particular through Transmission, since algorithmic 'creation', being based on past data, never starts from a blank page, and through Training, in which the human being will be all the more central as machines have to be handled.

As far as the Predictability of the future is concerned, the task is to assess Risks, whether specific or systemic, legal or non-legal, in order to decide whether or not to take them. The more the Lawyer is involved in risk-taking, the more he or she will be in the right place, before and during the action.

As far as the Radically New future is concerned, it is not easy to say whether AI falls into this category, but the possible disappearance of the Rule of Law in the United States now does. All Lawyers are expected to respond. Every lawyer must have two virtues, which the algorithm cannot have: the virtue of Justice and the virtue of Courage. It is these virtues that we must pass on and share.

____

Current events have led me to devote the time available to a single perspective, the third: what is expected of Lawyers when something radically new is perceived in the near future, as everyone now perceives it.

Indeed, in the United States, on the one hand there is a head of state for whom the Law does not exist and who uses the power of regulation to express his absolute indifference to other states, companies and human beings, and on the other an entrepreneur who claims that he is going to become the master of algorithmic technology, a system over which he already wields great power.

Faced with this Radical Novelty, we expect the community of Lawyers, all lawyers, whatever their position, their technical mastery, their level or their nationality, to speak out and say No, as Kelsen, Cassin and Ginsburg did. Say No and help others to say No. To do this, Lawyers, as human beings who care about other human beings, must be aware of the twofold virtue expected of them: the virtue of commitment to Justice and the virtue of Courage.

________

Jan. 28, 2025

Conferences

____

► Full Reference: M.-A. Frison-Roche, "Juger une situation familiale, une "obligation impossible"" (Judging a Family Situation, an "Impossible Obligation"), in Collège de Droit de l'Université Panthéon-Sorbonne (Paris I), Dialogue with Éliette Abécassis around her novel Divorce à la française, Amphi Turgot, Sorbonne, 28 January 2025, 8 pm to 9:30 pm, Paris.

____

🪑🪑🪑This conference was opened by Philippe Stoffel-Munck, co-director of the Collège de Droit of the Université Panthéon-Sorbonne (Paris I), who presented the careers, works and personalities of Éliette Abécassis and myself.

Then, following the principle of dialogue, Éliette Abécassis presented three points, from a literary and philosophical standpoint, on which she asked me to give my perspective.

- The first point concerned procedure, the contradictory nature of the accounts given by each party, and the place of truth in divorce proceedings.

- The second point concerned the difficulty of judging, indeed the very impossibility of judging, her novel being constructed to put the reader in the position of the judge: how can one manage to judge?

- The third point concerned the "profoundly human" nature of divorces and of judging them and, consequently, what the application of so-called artificial intelligence would produce in this field.

In accordance with the method agreed between us, not having been told in advance which perspectives would be chosen but knowing Éliette Abécassis and her work well, I therefore developed the following points on the spot, articulating them for an audience of first-, second- and third-year law students:

____

► Presentation of my answers to the questions raised by Éliette Abécassis in this dialogue: 🔴Éliette showed how, in Divorce à la française, she gave voice to the many people involved in the divorce proceedings, who give contradictory accounts and offer truths that contradict one another, with the procedure itself as the framework of the novel. Multiple truths are thus confronted, notably that of literature and that of Law.

I. THE ADVERSARIAL PRINCIPLE, TRUTH, THE PARTIES AND THE JUDGE

Procedure is indeed governed by the "adversarial principle" (principe du contradictoire). For the parties to the dispute, the point is not particularly to take part in the search for truth: a party to a trial wants to win, which notably means that their opponent loses. The main beneficiary of the debate, and of what feeds it, notably evidence, is the judge. In this respect, moreover, the adversarial principle differs from the rights of the defence, in that the latter do not always aim at truth but are higher-ranking prerogatives held by persons because they are at risk in view of the decision that may be taken. They may thus defend themselves, for example by lying or by remaining silent. Authorities are therefore more favourable to the adversarial principle, which works in their favour, than to the rights of the defence, subjective rights that are sometimes invoked against them. Because the judge is the guardian of the Rule of Law, he gives concrete effect to the adversarial principle but also to the rights of the defence. Because truth can also be an argument, it can also feed both defence and debate; but let us keep in mind this initial opposition, on which procedural law is founded and which Divorce à la française illustrates.

🔴The second point concerns the difficulty of judging. Éliette Abécassis stresses the difficulty of judging, which is all the more striking in her novel as the judge is both omnipresent and the only one who never speaks. It is therefore the reader who is made judge. Through his or her own experience as a reader, the reader perceives the difficulty of judging, but also the importance of judging. She refers in particular to Paul Ricoeur's work on the stakes of judgment and of the just.

II. THE DIFFICULT ART OF JUDGING, AN IMPOSSIBLE OBLIGATION

This made me think of the book I published with a very dear friend, who studied philosophy with me in this same Amphi Turgot for a philosophy degree, a book entitled La justice. L'obligation impossible (Justice: The Impossible Obligation). It is "impossible" to judge, because it is "impossible" to be just.

Should we therefore turn away from this office? From this claim? No. Justice, like truth, is a point that no one can reach, yet Justice is also a virtue that contains all the others, so that if we are not just we no longer have any virtue (for example the virtue of courage). We must therefore start, as every judge does, from situations.

Situations are unjust. To be just is first of all to be sensitive, to be perceptive of the intensity of injustice in this or that situation. That is already something. Then it is to act: that is, to name the injustice, which is already a first judgment. Then to decide it, repair it, console. This is how one can be just. That is doubtless why one becomes a judge, particularly when family situations are at stake.

🔴Éliette stresses the violence of the conflicts that is also expressed in divorce proceedings and that her novel stages. This instability of human relationships reflects a society that is "liquefying" the relationships between human beings, and soon human beings themselves. She is concerned about what the use of artificial intelligence will do to human justice.

III. ALGORITHMS, SUPPORT FOR OR DESTRUCTION OF THE JUDGE'S OFFICE

The third point therefore concerns the relevance, legitimacy and effectiveness of using algorithms in divorce litigation. It is tempting to answer wholesale that the soulless algorithmic system must not touch this litigation because, in Éliette Abécassis's words, it is "profoundly human" and therefore only a human judge may deal with it. But we must also consider that procedure, whose humanist dimension was shown earlier through the adversarial principle and the rights of the defence, is a machinery, with time limits and series of procedural acts that algorithms help to carry out and monitor.

Procedure is by nature time, more precisely duration, time passing. The dispute needs time to calm down; prolonging it can also exacerbate it. Algorithmic tools can allow the parties to free themselves, to have done with it. This is not only a matter of flow management from the institution's point of view, but also of justice for the litigants, who can be released from the dispute thanks to these tools. Timeliness and a reasonable time are also procedural guarantees.

The challenge is then to exercise discernment over two distinctions. First, distinguishing what falls within procedural logistics, which can be left to the algorithmic system, from what falls within the choices that must be left to the judge and the parties. Second, distinguishing what, across the various cases, is identical despite their singularity (which is the definition of analogy) and therefore lends itself to algorithmic power, from what is not analogous. Analogy is the very art of the lawyer.

_____

Oct. 1, 2024

MAFR TV : MAFR TV - Overhang

____

► Full Reference: M.-A. Frison-Roche, "Du Droit de la Compliance découle le Droit de l'Intelligence Artificielle" (Artificial Intelligence Law Derives from Compliance Law), in the Surplomb video series, 1 October 2024

____

🌐watch this video from the Surplomb series on LinkedIn

____

🎬watch this video from the Surplomb series below⤵️

____

Surplomb, by mafr

the video series dedicated to Regulation, Compliance and Vigilance

Updated: Sept. 15, 2024 (Initial publication: )

Law by Illustrations

► Full Reference: M.-A. Frison-Roche, "🎬𝑰'𝒎 𝒚𝒐𝒖𝒓 𝒎𝒂𝒏 - une question à laquelle réponse est demandée : la loi doit-elle autoriser le mariage avec un robot humanoïde ?" (I'm Your Man: A Question Requiring an Answer, Should the Law Authorise Marriage with a Humanoid Robot?), blog post, 15 September 2024.

► see the trailer

___

This 2021 German film by Maria Schrader was presented in competition at the Berlin International Film Festival, where Maren Eggert won the Silver Bear for Best Leading Performance.

It is a fable about artificial intelligence in our society, now and tomorrow.

In a society of the future, a near future, a company (we do not know which) offers humans the chance to test humanoid robots for 3 weeks as an "ideal companion", after which the human submits a report giving a reasoned opinion in answer to the following question: should the legislator change the law to allow marriage between a human and a humanoid?

The fable centres on a woman, Alma, who agrees to take part in this experiment. She is not paid and does not work in AI; she works on Persian culture and the deciphering of cuneiform script. Her boss tells her that if she agrees to the experiment, funding will probably be released so that her team can continue its research on this subject. He adds, laughing: "of course, I'm in no way trying to corrupt you".

So the "ideal companion", designed according to her tastes, appears. He has, for example, a British accent (she is an academic and appreciates the distinction of a British accent, etc.).

For 3 weeks, the perfect robot adapts, and although she struggles, she gradually finds that he really is perfect, and little by little she opens up.

Elle a beaucoup de soucis. Vit complètement seule. Son mari vient de la quitter pour une autre. Dont elle apprend qu'elle attend un enfant, tandis qu'elle a perdu un enfant jadis et n'en a jamais eu par la suite. Son père est atteint d'un Alzheimer en stade avancé et elle a bien du mal à l'aider.

Tandis que le robot, Tom, fait tout parfaitement, mais cela ne va jamais vraiment bien, puisqu'il ne sent pas, n'a pas froid, n'a pas faim, n'a pas peur. Il peut tout calculer, c'est commode. Elle se sent seule et veut arrêter car plus elle le voit et plus elle se sent à la fois seule et attirer par ce compagnon idéal qui lui appartient : I'm your man.

C'est vrai, c'est tentant.

A aucun moment, on ne voit ni ne parle de l'entreprise qui fabrique les algorithmes et les robots, qui sont manifestement au stade expérimental.

Elle rencontre dans la rue un homme qu'elle avait rencontré précédemment : il est juriste et tient à son bras une jeune fille, souriante, jolie, qui le regarde avec affection. Il lui dit : vous en êtes où ? Moi, j'ai été sollicité parce que je suis juriste. Pour donner une opinion juridique. Mais je suis si heureux ! Avant personne ne voulait de moi. Peut-être pour une histoire de pheromones ou pour mon physique (il est un peu gros) ou parce que j'ai 62 ans. Et maintenant je suis si heureux avec elle ! Je suis en train de négocier pour la garder toujours ! Je crois que je vais arriver à obtenir cela !"

Alma submits her report, which is only a few lines long: he is the perfect companion, able to relieve our loneliness, adjust to our desires and answer everything. But our human condition is to have desires that remain unfulfilled, questions that remain unanswered, and to keep moving forward. With such robots, humans will no longer move forward. The possibility of marriage between humans and human-shaped algorithms must be ruled out entirely.

____

This film belongs on the long list of films that take up this essential theme.

Spielberg's prophetic film, A.I. Artificial Intelligence, in which the robot played by Jude Law is a prostitute, and in which the main robot is the long-desired child.

The film Her, in which the algorithmic program the human has fallen in love with chooses instead to attune itself to another algorithm.

____

Let us look at this from the point of view of Law

1. The question is addressed to the Legislator. The answer is clear: it is No.

2. The industry is invisible: what can the Legislator do about an industry that cannot be seen or heard, that writes nothing, and whose representatives are themselves mere androids?

3. The question expressly asked also concerned ethics. After that, there is no further discussion and no answer: when there is no one to talk to, it is hard to formulate anything.

4. The link between scholars and industry is clearly shown; it is not shocking in itself.

5. The jurist, consulted on the legal dimension, is the one who handles it best: the one who wants to keep the "ideal young woman", the one who raises no ethical question at all.

Legally, then, it is a very sad fable.

____

June 28, 2024

Thesaurus : Doctrine

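► Full Reference: E. Netter, "Quand la force de conviction du scoring bancaire provoque sa chute. L'interprétation extensive, par la CJUE, de la prohibition des décisions entièrement automatisées" ("When the persuasive force of bank scoring brings about its downfall. The CJEU's extensive interpretation of the prohibition of fully automated decisions"), RTD com., 2024, chron., pp. 342-348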

____

🦉This article is available in full text to those enrolled in Professor Marie-Anne Frison-Roche's courses

________

June 28, 2024

Thesaurus : Soft Law

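► Full Reference: Autorité de la concurrence - ADLC (French Competition Authority), avis 24-A-05 du 28 juin 2024 relatif au fonctionnement concurrentiel du secteur de l'intelligence artificielle générative (Opinion 24-A-05 of June 28, 2024 on the competitive functioning of the generative artificial intelligence sector), June 2024, 104 p.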

____

____

📰read the press release accompanying the publication of the opinion

________

June 24, 2024

Conferences

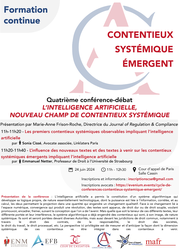

► Full Reference: M.-A. Frison-Roche, "Les deux rencontres entre l'intelligence artificielle et le Contentieux Systémique" ("The two meetings between Artificial Intelligence and Systemic Litigation"), in L'intelligence artificielle, nouveau champ de Contentieux Systémique (Artificial intelligence, new field of Systemic Litigation), in cycle of conference-debates "Contentieux Systémique Émergent" ("Emerging Systemic Litigation"), organised on the initiative of the Cour d'appel de Paris (Paris Court of Appeal), with the Cour de cassation (French Court of cassation), the Cour d'appel de Versailles (Versailles Court of Appeal), the École nationale de la magistrature - ENM (French National School for the Judiciary) and the École de formation des barreaux du ressort de la Cour d'appel de Paris - EFB (Paris Bar School), under the scientific direction of Marie-Anne Frison-Roche, June 24, 2024, 11am-12.30pm, Cour d'appel de Paris, Cassin courtroom.

____

🧮see the full programme of this event

____

► English Summary of the conference: In the general presentation on the theme itself, I underlined "The two meetings between Artificial Intelligence and Systemic Litigation".

The focus of this conference is not the state of what is usually called Artificial Intelligence, but rather how to correlate AI and "Emerging Systemic Litigation" (ESL).

This involves recalling what "Systemic Litigation" is (1), then looking at the contribution of Artificial Intelligence to dealing with this type of litigation (2), before considering that the algorithmic system itself can be a subject of Systemic Litigation (3).

1. What is the Systemic Litigation that we see Emerging?

On the very notion of "Emerging Systemic Litigation" (ESL), proposed in 2021, read: M.-A. Frison-Roche, 🚧The Hypothesis of the category of Systemic Cases brought before the Judge, 2021

Emerging Systemic Litigation concerns situations that are brought before the Judge and in which a System is involved. This may involve the banking system, the financial system, the energy system, the digital system, the climate system or the algorithmic system.

In this type of litigation, the interests and future of the system itself are at stake, "in the case". The judge must therefore "take them into consideration"📎

In this respect, "Emerging Systemic Litigation" must be distinguished from "Mass Litigation". "Mass litigation" refers to a large number of similar disputes. The fact that they are often of "low importance" is not necessarily decisive, as these disputes are important for the people involved and the use of A.I. must not overpower the specificity of each one. The fact remains, however, that the criterion for Systemic Litigation is the presence of a system. It may happen that a mass litigation calls into question the very interest of a system (for example, value date litigation), but more often than not the Systemic Litigation we see emerging is, unlike mass litigation, a very specific case in which one party, for example, formulates a very specific claim (e.g., asking for considerable work to be stopped) against a multinational company, and will thus "call into question" an entire value chain and the obligations incumbent on the powerful company to safeguard the climate system, which is therefore present in the proceedings (which does not, however, entitle it to make claims, but which must be taken into consideration).

2. The contribution of Algorithmic Power in the conduct of a Systemic Litigation

In this respect, AI can be a useful, if not indispensable, tool for mastering Systemic Litigation, whose emergence is a novelty and which is brought before the Ordinary Law Judge.

Indeed, this type of litigation is particularly complex and time-consuming, with evidentiary issues at the heart of the case, and with expert appraisal following on from expert appraisal. Expert appraisals are difficult to carry out. AI can therefore be a means for the judge to control the expert dimension of Systemic Litigation, in order to curb the increased risk of experts capturing the judge's decision-making power.

The choice of AI techniques raises the same difficulties as have always arisen with experts. Certification mechanisms, analogous to registration on lists of court experts, are likely to be put in place if we move away from tools built by the courts themselves (or by the government, which may pose a problem for the independence of the judiciary), or if we want to control tools supplied by the parties themselves, since the cost of these tools bears on the principle of equality of arms.

3. When it is the Algorithmic System itself that is the subject of a Systemic Litigation: its place is then rather in defense

Moreover, the algorithmic system itself gives rise to Systemic Litigation, in that individuals may bring a case before the courts claiming to have suffered damage as a result of the algorithmic system's operation, or seeking enforcement of a contract drawn up by the system. It is through ordinary Contract Law and Tort Law that the system may find itself drawn into judicial proceedings.

It is noteworthy that, compared with the hypotheses hitherto favored in previous conference-debates, notably those of April 26, 2024 on Emerging Systemic Litigation linked to the Duty of Vigilance📎

However, the situation changes if the system is no longer presented as the potential "victim" but rather as the potential "culprit". In particular, it is much less clear which type of intervener in the proceedings, not necessarily a party to the dispute, should speak to explain the system's interest, particularly with regard to the sustainability and future of the AI system.

This is an area for further consideration by heads of courts.

________

🕴️Fr. Ancel, 📝Compliance Law, a new guiding principle for the Trial?, in 🕴️M.-A. Frison-Roche (ed.), 📘Compliance Jurisdictionalisation, 2024.

🧮La vigilance, nouveau champ de contentieux systémique (Vigilance, new field of Systemic Litigation), in cycle of conference-debates "Contentieux Systémique Émergent" ("Emerging Systemic Litigation"), organised on the initiative of the Cour d'appel de Paris (Paris Court of Appeal), with the Cour de cassation (French Court of cassation), the Cour d'appel de Versailles (Versailles Court of Appeal), the École nationale de la magistrature - ENM (French National School for the Judiciary) and the École de formation des barreaux du ressort de la Cour d'appel de Paris - EFB (Paris Bar School), under the scientific direction of Marie-Anne Frison-Roche, April 26, 2024.

June 24, 2024

Organization of scientific events

► Full Reference: L'intelligence artificielle, nouveau champ de Contentieux Systémique (Artificial intelligence, new field of Systemic Litigation), in cycle of conference-debates "Contentieux Systémique Émergent" ("Emerging Systemic Litigation"), organised on the initiative of the Cour d'appel de Paris (Paris Court of Appeal), with the Cour de cassation (French Court of cassation), the Cour d'appel de Versailles (Versailles Court of Appeal), the École nationale de la magistrature - ENM (French National School for the Judiciary) and the École de formation des barreaux du ressort de la Cour d'appel de Paris - EFB (Paris Bar School), under the scientific direction of Marie-Anne Frison-Roche, June 24, 2024, 11am-12.30pm, Cour d'appel de Paris, Cassin courtroom.

____

🌐see on LinkedIn the report made of this event

____

► Presentation of this conference-debate: Artificial intelligence has enabled the creation of an algorithmic system. This develops its own logic, which is essentially technological in nature. It generates computing power based on the correlation of information to produce possible causalities and build probabilities that are sometimes likened to "predictions", with the mass processed eventually generating a qualitative change.

This change is linked to the digital space itself, which by its very nature gives rise to systemic disputes and litigation, to which the conference-debate on 27 May 2024 was devoted.

There are three ways in which this Emerging Systemic Litigation is involved, and it is important to anticipate these, as the litigation is either in its infancy or still to come, but will undoubtedly arise suddenly.

Firstly, the technological tool may make it possible to handle certain cases in which the technical nature of the concepts or of the claims, or the sheer number of claims, however simple each may be, calls for this capacity to process mass. This leads both to an increase in the mechanical power of algorithms and to a greater presence of human beings, in particular through more adversarial exchanges, pre-trial proceedings, mediation, etc.

Secondly, in the face of this change linked to the digital environment, 'texts' have appeared to 'regulate' the use or the very invention of this or that algorithmic tool. These texts are of a very diverse nature, from the softest to the hardest (this gradation between soft and hard law is the theme taken up in the conference-debate of 19 September 2024). They may be measures taken by the firms that produce the tools, by those that use them, or by those that disseminate them, with the people affected by the information being relatively active. This last point explains why systemic disputes are already underway, concerning the subjective rights that would be violated by the very nature of artificial intelligence, in this case the rights of content producers, the right to privacy, or the protection of other Monumental Goals. The systemic and extra-territorial dimension of these disputes has already been established.

The third point is the role of Politics, since the European Union, through the texts currently being adopted, has established that the Goal is not only the sustainability of the technical system, the innovation market and European sovereignty, but also the primacy of people and individuals, through a method that is the Ex Ante ranking of risks. This conception is also contested. This methodological issue also applies to judges.

This Emerging Systemic Litigation is and will be brought before various regulatory or supervisory Authorities, but also before the administrative and judiciary courts, in particular through Contract Law, Tort Law, Company Law, Labour Law, General Procedural Law, etc.

The aim here is to measure and anticipate the way in which the systemic dimension of these disputes will be incorporated into future litigation.

____

🧮Programme of this event:

Fourth conference-debate

L’INTELLIGENCE ARTIFICIELLE, NOUVEAU CHAMP DE CONTENTIEUX SYSTÉMIQUE

(ARTIFICIAL INTELLIGENCE, NEW FIELD OF SYSTEMIC LITIGATION)

Paris Court of Appeal, Cassin courtroom

Presentation and moderation by 🕴️Marie-Anne Frison-Roche, Professor of Regulatory & Compliance Law, Director of the Journal of Regulation & Compliance (JoRC)

🕰️11am.-11.10am. 🎤Les deux rencontres entre l'intelligence artificielle et le Contentieux Systémique (The two meetings between Artificial Intelligence and Systemic Litigation), by 🕴️Marie-Anne Frison-Roche, Professor of Regulatory & Compliance Law, Director of the Journal of Regulation & Compliance (JoRC)

🕰️11.10am-11.30am. 🎤Les premiers contentieux systémiques observables impliquant l’intelligence artificielle (The first observable Systemic Litigations involving artificial intelligence), by 🕴️Sonia Cissé, Partner, Linklaters Paris

🕰️11.30am-11.50am. 🎤L’influence des nouveaux textes et des textes à venir sur les contentieux systémiques émergents impliquant l’intelligence artificielle (The influence of new and forthcoming legislation on Emerging Systemic Litigation involving artificial intelligence), by 🕴️Emmanuel Netter, Professor of Law at Strasbourg University

🕰️11.50am-12.30pm. Debate

____

🔴Registrations and information requests can be sent to: inscriptionscse@gmail.com

🔴For the attorneys, registrations have to be sent to the following address: https://evenium.events/cycle-de-conferences-contentieux-systemique-emergent/

⚠️The conference-debates are held in person only, in the Cour d’appel de Paris (Paris Court of Appeal).

____

🧮Read below the report made of this event by Marie-Anne Frison-Roche⤵️

June 13, 2024

Thesaurus : 06.1. European Union Texts

► Full Reference: Regulation (EU) 2024/1689 of the European Parliament and of the Council of 13 June 2024 laying down harmonised rules on artificial intelligence and amending Regulations (EC) No 300/2008, (EU) No 167/2013, (EU) No 168/2013, (EU) 2018/858, (EU) 2018/1139 and (EU) 2019/2144 and Directives 2014/90/EU, (EU) 2016/797 and (EU) 2020/1828 (Artificial Intelligence Act - "AI Act")

____

________

June 3, 2024

Thesaurus : Convention, contract, settlement, engagement

► Full Reference: N. Yax, H. Anlló & S. Palminteri, "Studying and improving reasoning in humans and machines", Communications Psychology, vol. 2, article 51 (2024)

____

May 30, 2024

Thesaurus : Doctrine

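► Full Reference: Th. Douville, "De l'approche extensive de la prise de décision exclusivement automatisée (à propos du refus d'un prêt fondé sur une note de solvabilité communiquée par un tiers)" ("On the extensive approach to exclusively automated decision-making (regarding the refusal of a loan based on a credit score provided by a third party)"), D. 2024, pp. 1000-1004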

____

🦉This article is available in full text to those enrolled in Professor Marie-Anne Frison-Roche's courses

________

May 27, 2024

Organization of scientific events

► Full Reference: Les contrôles techniques des risques présents sur les plateformes et les contentieux engendrés (Technical risks controls on platforms and disputes arising from them), in cycle of conference-debates "Contentieux Systémique Émergent" ("Emerging Systemic Litigation"), organised on the initiative of the Cour d'appel de Paris (Paris Court of Appeal), with the Cour de cassation (French Court of cassation), the Cour d'appel de Versailles (Versailles Court of Appeal), the École nationale de la magistrature - ENM (French National School for the Judiciary) and the École de formation des barreaux du ressort de la Cour d'appel de Paris - EFB (Paris Bar School), under the scientific direction of Marie-Anne Frison-Roche, May 27, 2024, 9h-10h30, Cour d'appel de Paris, Cassin courtroom

____

🧮see the full programme of the cycle Contentieux Systémique Émergent (Emerging Systemic Litigation)

____

🌐see on LinkedIn the report of this event

____

🧱read below the report of this event⤵️

____

► Presentation of the conference: The digital space is full of risks. Some are naturally associated with it, because it is an area of freedom, while others must be countered because they are associated with generally prohibited behaviour, such as money laundering. But the digital space has developed risks which, because of their scale, have been transformed in their very nature: this is particularly true of the distortion permeating certain content and the insecurity that can threaten the entire system itself. Law has therefore entrusted operators themselves with vigilance over what have become ‘cyber-risks’, such as the risk of disinformation, the risk of destruction of communication infrastructures, and the risk of data theft, a systemic prospect that can lead to the collapse of societies themselves.

New legislation has been drafted, in particular the Digital Services Act (DSA), to increase the burdens and powers of firms in this area, with digital companies in the front line, but also those of supervisory authorities such as the Autorité de régulation de la communication audiovisuelle et numérique - Arcom (French Regulatory Authority for Audiovisual and Digital Communication). The resulting disputes, in which firms and regulators may be allies or opponents, are systemic in nature.

The judge's handling of these "systemic cases", through the procedure and the solutions, must respond to this systemic dimension. The "pornographic websites" case, which is currently unfolding, provides an opportunity to observe in vivo the dialogue between judges when a "systemic case" imposes itself on them.

____

🧮Programme of this event:

Third conference-debate

LES CONTRÔLES TECHNIQUES DES RISQUES PRÉSENTS SUR LES PLATEFORMES ET LES CONTENTIEUX ENGENDRÉS

(TECHNICAL RISKS CONTROLS ON PLATFORMS AND DISPUTES ARISING FROM THEM)

Paris Court of Appeal, Cassin courtroom

Moderated by 🕴️Marie-Anne Frison-Roche, Professor of Regulatory and Compliance Law, Director of the Journal of Regulation & Compliance (JoRC)

🕰️9h-9h10. 🎤Le contentieux Systémique Emergent du fait du système numérique (Systemic Litigation Emerging from the Digital System), 🕴️Marie-Anne Frison-Roche

🕰️9h10-9h30. 🎤Les techniques de gestion du risque systémique pesant sur la cybersécurité des plateformes (Techniques for managing the systemic risk to platforms' cybersecurity), 🕴️Michel Séjean, Professor of Law at Sorbonne Paris Nord University

🕰️9h30-9h50. 🎤Un cas systémique in vivo : le cas dit des sites pornographiques (An in vivo Systemic Case: the so-called case of pornographic websites), 🕴️Marie-Anne Frison-Roche

🕰️9h50-10h10. 🎤Les obligations systémiques des opérateurs numériques à travers le Règlement sur les Services Numériques (RSN/DSA) et le rôle des régulateurs (Systemic Obligations of Operators (DSA) and the role of Regulators), 🕴️Roch-Olivier Maistre, Chair of the Autorité de régulation de la communication audiovisuelle et numérique - Arcom (French Regulatory Authority for Audiovisual and Digital Communication)

🕰️10h10-10h30. Debate

____

🔴Registrations and information requests can be sent to: inscriptionscse@gmail.com

🔴For the attorneys, registrations have to be sent to the following address: https://evenium.events/cycle-de-conferences-contentieux-systemique-emergent/

⚠️The conference-debates are held in person only, in the Cour d’appel de Paris (Paris Court of Appeal).

____

🧱read below a detailed presentation of this event⤵️

________